You pull up a movie and the numbers do not add up. Rotten Tomatoes says 91 percent. IMDb says 6.9. Metacritic says 85. These are supposed to be the same film reviewed by people who watched the same footage, and they look like three different opinions on three different movies. Something is off.

Nothing is off. The three platforms were built to answer different questions, and the gaps between them are not errors in the system. They are the system telling you something specific about the film you are considering. Once you understand what each score actually measures, the disagreements stop being frustrating and start being useful.

I built Limelight partly because I kept running this calculation in my head every time I was picking something to watch, and it is a genuinely annoying thing to do at nine o'clock when you just want to sit down. But understanding the math behind each score is worth doing once, because it changes how you read them forever. Here is the full breakdown.

Why do the three scores disagree so often?

The short answer is that they are measuring different populations with different methods and calling the result by the same general name: a rating.

Rotten Tomatoes counts the percentage of approved critics who gave a film a positive review. IMDb averages out millions of general audience ratings, then applies weighting to remove manipulation. Metacritic takes a curated pool of roughly 40 to 50 top critics per film and calculates a weighted average on a 0 to 100 scale, with individual critics given different weights based on their perceived stature.

Even if every single person in all three systems had the exact same subjective reaction to a film, the scores would still differ because the systems are fundamentally different: one is a binary threshold, one is a weighted crowd average, and one is a weighted elite average. They are not three attempts to measure the same thing. They are three different measurements, and comparing them directly is like being surprised that a thermometer and a barometer give you different readings on the same afternoon.

The gaps become even wider because each system attracts a different audience. The critics on Rotten Tomatoes tend to skew toward prestige drama and formally adventurous cinema. The users on IMDb are disproportionately male, between 18 and 44, and genre-fluent in ways the general population is not. The critics on Metacritic are a narrow pool of established voices at major publications, weighted toward legacy media. None of those populations is a neutral cross-section of humanity, which is exactly why you want to look at all three.

How does Rotten Tomatoes actually calculate its score?

The Tomatometer is a percentage, but it is not an average. It is the share of approved critics who gave the film a positive review. Each review is classified as either fresh (positive) or rotten (negative), and the Tomatometer is the percentage that landed in the fresh column.

A few specifics that matter:

- The "Fresh" label on the Tomatometer appears when 60 percent or more of counted reviews are positive. That 60 percent is an aggregate threshold, not a per-review rule. Individual critics either self-designate their review as positive or negative, or RT's editorial team classifies mixed reviews based on context. A critic who writes "it mostly works" might land fresh; one who writes "ultimately disappointing" lands rotten. The cutoff is contextual, not mathematical, which is part of why the system is hard to game and also why two people reading the same review might classify it differently.

- The critic pool is broad. Rotten Tomatoes approves critics from a wide range of outlets, from the New York Times to mid-size regional publications and individual freelancers with approved platforms. The barrier to entry is lower than Metacritic's and the sample sizes for major releases often run into the hundreds.

- Certified Fresh requires a minimum of 80 reviews for wide-release films (40 for limited releases), a score of 75 percent or above, and at least five reviews from Top Critics. It is a consistency threshold. A film can be Certified Fresh while no single critic called it a masterpiece.

- The Audience Score is a completely separate system. It collects ratings from verified ticket buyers and general users after they have watched the film, and it is calculated entirely differently from the Tomatometer. The two numbers are often displayed side by side, which creates the impression that they should be similar, but there is no reason they would be.
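
The thresholds in the list above are simple enough to write down. Here is a minimal Python sketch that assumes each review has already been classified as positive or negative; the function names are mine for illustration, not anything Rotten Tomatoes publishes.

```python
def tomatometer(is_positive: list[bool]) -> float:
    """Percentage of counted reviews classified as positive (fresh)."""
    if not is_positive:
        raise ValueError("need at least one review")
    return 100 * sum(is_positive) / len(is_positive)


def tomatometer_label(score: float, review_count: int,
                      top_critic_reviews: int, wide_release: bool = True) -> str:
    """Apply the Fresh and Certified Fresh thresholds quoted in the list above."""
    min_reviews = 80 if wide_release else 40
    if score >= 75 and review_count >= min_reviews and top_critic_reviews >= 5:
        return "Certified Fresh"
    return "Fresh" if score >= 60 else "Rotten"


# Hypothetical limited release: 12 counted reviews, 10 of them positive.
reviews = [True] * 10 + [False] * 2
score = tomatometer(reviews)
print(round(score), tomatometer_label(score, len(reviews),
                                      top_critic_reviews=3, wide_release=False))
# prints: 83 Fresh
```

The example film clears the 75 percent bar but has far too few reviews for Certified Fresh, so it stays at plain Fresh.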

The structural problem with the Tomatometer is binary collapse. A film where 80 percent of critics gave it a passing grade and 20 percent called it awful gets an 80 percent. A film where 80 percent of critics called it the best film of the decade and 20 percent called it awful also gets an 80 percent. The enthusiasm is invisible. Two completely different critical receptions produce the same number.
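
To see binary collapse in numbers, here is a small Python sketch with two invented films. Each review carries a positive/negative flag plus a hypothetical 0 to 100 enthusiasm score; the per-review scores are made up for illustration and are not something the Tomatometer records.

```python
from statistics import mean

# Each review is (counted_as_positive, hypothetical 0-100 enthusiasm score).
lukewarm_film = [(True, 62)] * 80 + [(False, 20)] * 20
beloved_film = [(True, 95)] * 80 + [(False, 20)] * 20


def tomatometer(reviews):
    """Share of reviews flagged positive, as a percentage."""
    return 100 * sum(positive for positive, _ in reviews) / len(reviews)


def average_score(reviews):
    """Plain mean of the underlying enthusiasm scores."""
    return mean(score for _, score in reviews)


for name, reviews in [("lukewarm", lukewarm_film), ("beloved", beloved_film)]:
    print(name, tomatometer(reviews), average_score(reviews))
```

Both films come out at 80 percent on the binary measure, while the average enthusiasm is 53.6 for the first and 80 for the second.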

From Rotten Tomatoes' point of view, this is not a bug. The platform was built to answer a specific question: did critics recommend it? The Tomatometer answers that question cleanly. It just cannot answer "how much did they recommend it," which is a different question and often the more useful one.

How does IMDb actually calculate its score?

IMDb's score is a weighted average of user ratings on a one to ten scale, but the displayed score is not a simple mean of all submitted votes. IMDb applies a proprietary algorithm specifically designed to resist manipulation: vote-stuffing, brigading, and demographic outliers are trimmed or reweighted before the final number is calculated.

IMDb is transparent about the existence of weighting but not about its specifics. The overall score is weighted to reflect something closer to a "regular" viewer than an enthusiast population. In practice, a film targeted by organized rating campaigns, either boosted by fans or tanked by detractors, typically ends up with a score closer to what neutral viewers gave it. IMDb previously displayed public demographic breakdowns by gender and age group on every film page, but removed those from the public site; they are now available only via IMDb Pro. The skew still exists in the underlying data even if it is no longer visible at a glance.
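
IMDb does not publish its method, so the following is not its algorithm. It is a generic trimmed mean in Python, included only to show why any outlier-resistant average shrugs off a coordinated block of extreme votes. The vote counts and the 10 percent trim fraction are assumptions.

```python
def trimmed_mean(votes: list[int], trim_fraction: float = 0.1) -> float:
    """Mean after dropping the lowest and highest trim_fraction of votes."""
    if not 0 <= trim_fraction < 0.5:
        raise ValueError("trim_fraction must be in [0, 0.5)")
    ordered = sorted(votes)
    k = int(len(ordered) * trim_fraction)
    kept = ordered[k:len(ordered) - k]
    return sum(kept) / len(kept)


# Hypothetical title: 900 organic votes of 7, plus a 100-vote brigade of 1s.
votes = [7] * 900 + [1] * 100

plain = sum(votes) / len(votes)   # the brigade drags the simple mean down
robust = trimmed_mean(votes)      # the brigade falls inside the trimmed tail

print(plain, robust)
```

In this toy case the simple mean drops to 6.4 while the trimmed mean stays at 7.0, which is the general behavior the weighting is meant to produce.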

The demographic skews that remain even after weighting are worth understanding:

- IMDb's active rating population skews male. Across most titles, male voters outnumber female voters by a significant margin, sometimes three or four to one on mainstream genre releases. Films with primarily female audiences or female-centric storylines often score lower on IMDb than on other platforms for this reason.

- IMDb users are genre-fluent. The platform has deep roots in action, horror, science fiction, and franchise cinema. Someone who has logged 2,000 films on IMDb has a very different frame of reference from someone who sees 12 movies a year.

- Opening-weekend ratings skew high. The first wave of ratings on any release comes from the most enthusiastic segment of the audience: people who made a point of seeing it opening night. The score tends to drift down slightly over the following weeks as the broader audience catches up.

Despite these quirks, IMDb is often the most useful signal for genre films, franchise entries, and broad audience entertainment. The people rating action movies on IMDb know action movies extremely well. A 7.8 on a thriller means something specific when it comes from that population.

How does Metacritic actually calculate its score?

Metacritic's Metascore is a weighted average of professional critics, but with two significant differences from Rotten Tomatoes. First, each review is scored on a 0 to 100 scale rather than classified as binary fresh or rotten. A grudging three-star review might translate to a 55. A passionate four-and-a-half-star review might translate to an 88. The nuance survives. Second, Metacritic assigns each critic in its pool a weight based on their perceived prominence, so a review in the New York Times or the A.V. Club carries more weight than one from a smaller outlet.
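
Metacritic does not disclose its critic weights, so the weights below are invented. With that caveat, a weighted average of 0 to 100 review scores can be sketched in Python like this:

```python
def weighted_score(reviews: list[tuple[float, float]]) -> float:
    """Weighted average of (score, weight) pairs on a 0-100 scale."""
    total_weight = sum(weight for _, weight in reviews)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(score * weight for score, weight in reviews) / total_weight


# Hypothetical pool: one heavily weighted rave and two standard-weight reviews.
reviews = [
    (88, 1.5),  # prominent outlet, assumed weight
    (55, 1.0),
    (70, 1.0),
]

weighted = weighted_score(reviews)
unweighted = sum(score for score, _ in reviews) / len(reviews)
print(round(weighted, 1), round(unweighted, 1))
```

The heavier weight on the rave pulls the result a couple of points above the plain average, which is the whole effect of stature weighting in miniature.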

The critic pool itself is curated and relatively small: roughly 40 to 50 reviews per major release, compared to potentially several hundred on Rotten Tomatoes. This narrowness has two effects. On big releases with broad critical coverage, the Metascore tends to be stable and informative. On smaller films with limited reviews, a single outlier opinion can move the needle noticeably, and the score should be read with more caution.

The Metascore also has a useful secondary indicator: a color code that sorts scores into three bands. A score of 61 or above lands in the green "generally favorable" zone. Scores from 40 to 60 land in the yellow "mixed or average" zone. Scores below 40 land in the red "generally unfavorable" zone. The bands make for a fast sanity check, but the specific number within each band still matters.
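
The three bands map directly onto a small lookup. A Python sketch using the cutoffs quoted above (the function name is mine):

```python
def metascore_band(score: int) -> str:
    """Return the color band for a 0-100 Metascore."""
    if not 0 <= score <= 100:
        raise ValueError("Metascore must be between 0 and 100")
    if score >= 61:
        return "green: generally favorable"
    if score >= 40:
        return "yellow: mixed or average"
    return "red: generally unfavorable"


for score in (84, 49, 35):
    print(score, metascore_band(score))
```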

Of the three platforms, Metacritic is the closest to what I would call a professional critical consensus. It is a smaller, more curated signal, which means it has more variance on obscure films but is more reliable on anything with broad critical coverage. When Metacritic and Rotten Tomatoes agree on a score in the same general range, that is a particularly strong signal.

Where is each platform most biased?

Every rating system has structural biases baked in, and knowing them makes the numbers more useful, not less.

Rotten Tomatoes' biggest weaknesses:

- Binary collapse, as described above. The scale cannot distinguish between lukewarm approval and passionate enthusiasm.

- Sample-size variance on indie and foreign films. A film with 12 reviews where 10 are positive gets an 83 percent Tomatometer. That is not the same signal as an 83 percent built on 340 reviews.

- Audience Score manipulation. The Audience Score system has been used repeatedly by organized fan communities to either boost or bomb films before most people have seen them. This is less of a problem since Rotten Tomatoes introduced ticket verification, but the score still drifts from genuine audience reaction in politically charged releases.

- Recency bias in the critic pool. Reviews posted closer to the release date represent a different emotional reaction than reviews written six months later, and all of them count equally.
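
The sample-size point can be made concrete with a confidence interval. The Python sketch below uses the standard Wilson score interval and treats reviews as independent draws, which is a simplifying assumption (critics influence one another and are not a random sample). The 282-of-340 figure is a made-up count that lands near 83 percent.

```python
from math import sqrt


def wilson_interval(positive: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion."""
    p = positive / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


small = wilson_interval(10, 12)     # 83 percent from 12 reviews
large = wilson_interval(282, 340)   # about 83 percent from 340 reviews

for name, (low, high) in [("12 reviews", small), ("340 reviews", large)]:
    print(f"{name}: {low:.0%} to {high:.0%}")
```

The interval for the 12-review film is several times wider than the one for the 340-review film, even though the headline percentage is the same.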

IMDb's biggest weaknesses:

- Demographic skew, particularly on films with female-skewing audiences or non-Western subject matter.

- Fan community distortion on franchise films. Marvel, DC, and Star Wars titles attract ratings from communities whose investment in the franchise is not representative of general viewers.

- The 10-star scale invites anchor bias. Some viewers reserve 10 for films they consider perfect and give everything else a 7. Others treat 7 as average. The weighting algorithm accounts for this partially, but not entirely.

Metacritic's biggest weaknesses:

- Legacy-critic weighting. The critics carrying the most weight on Metacritic tend to be at established print and digital publications. Critics with newer or smaller platforms are either excluded or down-weighted even if their reviews are thoughtful and well-regarded.

- Slow refresh on limited releases. Small films sometimes go weeks with 12 to 15 reviews and a score that is not yet stable. A single additional review can shift the score by three or four points.

- Occasional stale reviewer pool. Metacritic's approved critic list is not updated continuously, and some films are scored by critics who have not been active for years.
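
The instability on small review counts is plain arithmetic. This Python sketch ignores critic weighting (an assumption, since the real weights are not public) and measures how far one new review moves a simple average:

```python
def shift_from_new_review(scores: list[float], new_score: float) -> float:
    """Change in the unweighted mean after one more review is added."""
    old_mean = sum(scores) / len(scores)
    new_mean = (sum(scores) + new_score) / (len(scores) + 1)
    return new_mean - old_mean


# Hypothetical films sitting at 70, each receiving one harsh review of 20.
small_pool = shift_from_new_review([70] * 12, 20)
large_pool = shift_from_new_review([70] * 45, 20)

print(round(small_pool, 2), round(large_pool, 2))
# prints: -3.85 -1.09
```

One dissenting review costs the 12-review film nearly four points and the 45-review film about one.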

What do 40-point disagreements actually tell you?

A gap of 40 or more points between two of these scores is not a sign that someone is wrong. It is a specific kind of information about the film. The gaps cluster into a few recognizable patterns.

The fan-vs-critic split: Critics hated it, audiences loved it. This is the most common large gap, and it almost always signals a genre film made for a dedicated fan base that does not include most critics. Batman v Superman: Dawn of Justice landed around 28 percent on Rotten Tomatoes, 44 on Metacritic, and 6.4 on IMDb (64 on the equivalent 100-point scale), a gap of 36 points between the Tomatometer and the audience rating. Venom was similar: roughly 31 percent on Rotten Tomatoes, 35 on Metacritic, and 6.6 on IMDb. The critics were correct that both films had significant structural problems. The fans were also correct that they delivered something the target audience wanted. Both readings are accurate. They are measuring different things.

The critic-vs-fan split: Critics loved it, a portion of the audience resented it. Star Wars: The Last Jedi is the canonical case. It landed at 91 percent on Rotten Tomatoes and 84 on Metacritic. Its IMDb score is 7.9, which looks healthy, and there is an instructive reason for that. The fan backlash was intense enough to spawn organized vote-bombing campaigns against the film's IMDb rating. IMDb's weighting algorithm largely absorbed them. The score that captured the audience split more clearly was the Rotten Tomatoes Audience Score, which settled at 43 percent. That gap between 7.9 on IMDb and 43 percent on the RT Audience Score is itself a lesson: IMDb resisted the manipulation; RT's Audience Score, which has lighter anti-manipulation controls, did not. The critics appreciated what the film was trying to do narratively. A large segment of the audience felt it violated expectations built over decades. Both reactions were real. The platform you were looking at determined which one you saw. A separate breakdown of when to trust audience scores over critic scores, and when the gap between them is itself the signal, is worth reading if you use these platforms regularly.

The broad comedy gap: Critics are demonstrably worse at evaluating broad comedies than almost any other genre. Films like Grown Ups and similar studio comedies routinely land at 10 to 20 percent on Rotten Tomatoes while scoring in the 5.5 to 6.5 range on IMDb (55 to 65 on a 100-point scale). This is not the audience being wrong. It is critics applying a different set of criteria (formal construction, narrative ambition, originality) to a genre that was never designed to satisfy those criteria. If a comedy made you laugh, it succeeded, regardless of whether it used three-act structure correctly.

A 95 on Rotten Tomatoes and a 55 on Metacritic is not a contradiction. It is telling you what kind of film you are about to watch.

Here is a side-by-side comparison of a few films where the three scores tell notably different stories (scores approximate at time of writing):

| Film | RT Tomatometer | Metacritic | IMDb (x10) |
|---|---|---|---|
| Batman v Superman (2016) | 28% | 44 | 64 |
| Venom (2018) | 31% | 35 | 66 |
| The Last Jedi (2017) | 91% | 84 | 79 |
| Bohemian Rhapsody (2018) | 60% | 49 | 79 |
| Grown Ups (2010) | 10% | 30 | 60 |

Each of those rows has a story. Bohemian Rhapsody is a biopic that critics found formulaic (60 percent on RT, 49 Metascore) and audiences found deeply satisfying (7.9 on IMDb). That is useful information before you sit down with it. You are watching a crowd-pleaser that trades formal ambition for emotional momentum, and it delivers the thing it was designed to deliver very well.
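
If you want to compute the gaps yourself, the trick is to put everything on the same 100-point scale first. The Python sketch below uses the approximate table values as given (IMDb already multiplied by ten) and reports the widest spread for each film along with which platforms sit at the two extremes.

```python
# (RT Tomatometer, Metacritic, IMDb x10), approximate values from the table.
films = {
    "Batman v Superman (2016)": (28, 44, 64),
    "Venom (2018)": (31, 35, 66),
    "The Last Jedi (2017)": (91, 84, 79),
    "Bohemian Rhapsody (2018)": (60, 49, 79),
    "Grown Ups (2010)": (10, 30, 60),
}


def widest_gap(rt: int, metacritic: int, imdb_x10: int) -> tuple[int, str, str]:
    """Return (gap, lowest platform, highest platform) on a 100-point scale."""
    scores = {"RT": rt, "Metacritic": metacritic, "IMDb": imdb_x10}
    lowest = min(scores, key=scores.get)
    highest = max(scores, key=scores.get)
    return scores[highest] - scores[lowest], lowest, highest


for title, row in films.items():
    gap, lowest, highest = widest_gap(*row)
    print(f"{title}: {gap} points ({lowest} lowest, {highest} highest)")
```

Knowing which platform is the outlier matters as much as the size of the gap: IMDb on top points to a fan-driven film, while IMDb at the bottom points to a critics' film.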

Which score should you trust for which type of movie?

This is the practical question, and the answer depends on what you are trying to decide.

Trust Metacritic most for prestige drama, arthouse cinema, and Oscar contenders. The curated critic pool at Metacritic was built for exactly these genres, and the weighted 0 to 100 scale preserves the nuance that Rotten Tomatoes' binary system loses. A Metascore of 80 means serious critics took the film seriously. A Metascore of 55 means it divided them.

Trust IMDb most for genre films. Action, horror, thriller, sci-fi, franchise entries, and broad comedy are all categories where the IMDb user base has a deep and well-calibrated frame of reference. A 7.5 on an action film from the people who have seen every action film made in the last 30 years means something. These voters know the genre and can place the film accurately within it.

Use the Rotten Tomatoes Tomatometer as a quick pass/fail for critical reception. If the Tomatometer is above 70 percent, most critics liked it. Below 40 percent, most critics didn't. In the middle is genuinely mixed territory. Do not use it as a measure of enthusiasm or quality, because it was not built to measure those things.
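
As a rule of thumb, that reading looks like the Python sketch below. The 70 and 40 cutoffs are the rough guide from the paragraph above, not official Rotten Tomatoes categories.

```python
def read_tomatometer(score: float) -> str:
    """Quick pass/fail reading of a Tomatometer percentage."""
    if not 0 <= score <= 100:
        raise ValueError("Tomatometer must be between 0 and 100")
    if score > 70:
        return "most critics liked it"
    if score < 40:
        return "most critics did not like it"
    return "genuinely mixed"


for score in (91, 60, 28):
    print(score, read_tomatometer(score))
```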

Use the Rotten Tomatoes Audience Score cautiously. It is the most susceptible to manipulation and demographic skew of any score on this list, but it does contain a genuine signal about whether the paying audience left happy. Check it after a release has been out for a few weeks, when the opening-weekend boosters and detractors have been diluted by the broader audience.

When all three agree, pay attention. A film that scores 90 percent on Rotten Tomatoes, 88 on Metacritic, and 8.2 on IMDb has satisfied a professional critical consensus, an elite critic consensus, and a mass audience simultaneously, which is genuinely rare. Films that accomplish all three are worth prioritizing.

What is the honest read when all three disagree?

Treat them as three different signals about the same film rather than three attempts to answer the same question. When they disagree, you can usually construct a coherent picture from the combination.

High Rotten Tomatoes, low Metacritic, high IMDb: critics broadly recommended it, serious critics were not enthusiastic, general audiences loved it. This is a crowd-pleaser that works without being formally distinguished. Probably a fun watch. Probably not a film you'll be thinking about a week later.

Low Rotten Tomatoes, low Metacritic, high IMDb: critics hated it, genre fans loved it. This is a film for a specific audience that critics are not in. If you are that audience, ignore the critical scores entirely.

High Rotten Tomatoes, high Metacritic, low IMDb: critical consensus is strong, general audiences are disappointed. This usually signals a formally challenging or slow-burning film that requires patience and rewards it. The IMDb score is telling you about the gap between what the film was trying to do and what a portion of the audience expected it to do.

All three high: rare and worth noting. All three mediocre: a film that did not offend or delight anyone particularly, which is its own kind of data. The worst situation is all three low, where critics, elite critics, and the general audience all agreed the film failed. Those you can skip.
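
The patterns above can be turned into a rough lookup. This Python sketch makes one big simplifying assumption: a score counts as "high" at 60 or above on a 100-point scale (IMDb multiplied by ten) and "low" otherwise. That single cutoff is my choice for illustration, and it is too blunt to express the "all three mediocre" case.

```python
HIGH_CUTOFF = 60  # assumed threshold, applied to all three 100-point scores

PATTERNS = {
    (True, True, True): "all three high: prioritize it",
    (False, False, False): "all three low: skip it",
    (True, False, True): "crowd-pleaser, not formally distinguished",
    (False, False, True): "made for genre fans, not for critics",
    (True, True, False): "challenging or slow-burning critics' film",
}


def read_pattern(rt: float, metacritic: float, imdb_x10: float) -> str:
    """Map (RT, Metacritic, IMDb x10) to one of the patterns described above."""
    key = (rt >= HIGH_CUTOFF, metacritic >= HIGH_CUTOFF, imdb_x10 >= HIGH_CUTOFF)
    return PATTERNS.get(key, "no named pattern: read each score on its own")


print(read_pattern(60, 49, 79))  # Bohemian Rhapsody, approximate scores
print(read_pattern(31, 35, 66))  # Venom, approximate scores
```

Treat the output as a prompt for your own reading rather than a verdict. A film sitting right on the cutoff can flip categories with a one-point change.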

The one thing I would caution against is anchoring on a single score and ignoring the others. The person who only checks IMDb is going to systematically underestimate prestige drama. The person who only checks Rotten Tomatoes is going to systematically underestimate broad genre films and comedies. The person who only checks Metacritic is going to miss a lot of legitimately fun films that the established critical class never warmed to.

How do I look at all three at once without doing homework every time?

You can open three separate tabs and cross-reference manually, which is what I did for years. It is tedious enough that most people stop doing it and default to whichever single number they trust most, which is how you end up missing films you would have loved and watching films you should have skipped. If you want a starting point where all three platforms already agree, the 50 best films of the 21st century list is built around exactly that: films with strong consensus across critic and audience scores.

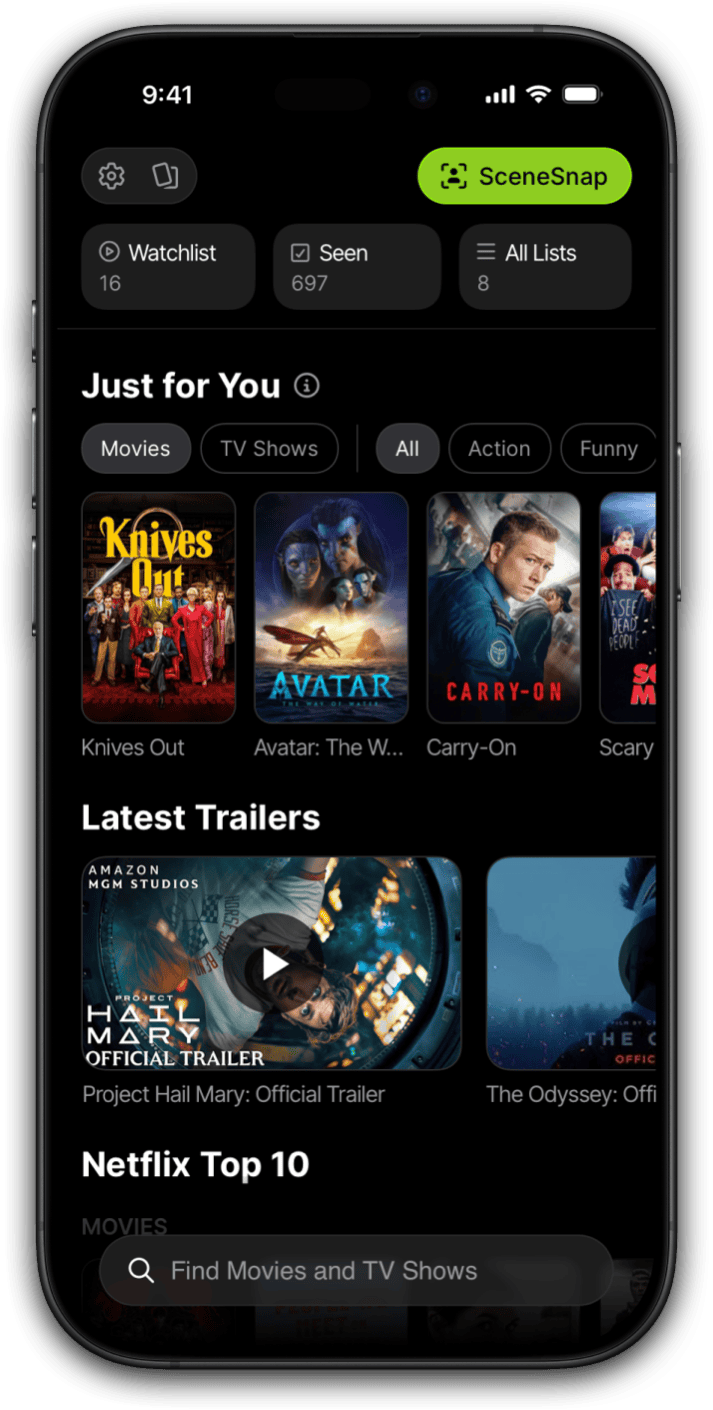

Limelight surfaces four scores (Rotten Tomatoes, IMDb, Metacritic, and TMDB) on a single detail page for every film and TV show. The combination I just described, checking the three main scores and reading them together, is the default view. You do not need three apps or three tabs. The information is already there when you pull up a title to add to your Watchlist.

Understanding the methodology is worth doing once. After that, the goal is to make the right call about what to watch tonight without spending ten minutes doing research. That is the problem the three scores together actually solve, provided you can see them in the same place at the same time.

Frequently asked questions

Which movie rating site is the most accurate?

None of the three is objectively most accurate because they measure different things. Metacritic is closest to a true critical consensus, using a weighted average of a curated pool of professional critics. IMDb is the best read on general audience reception. Rotten Tomatoes tells you whether a majority of critics were positive, but says nothing about how positive they were.

Why does Rotten Tomatoes sometimes show two different scores?

The Tomatometer (the red or green percentage) reflects the share of approved critics who gave the film a positive review. The Audience Score is a completely separate system based on verified ticket buyers and general users rating the film after seeing it. The two scores measure entirely different populations with different incentives, which is why they often diverge significantly.

What does "Certified Fresh" actually mean on Rotten Tomatoes?

Certified Fresh requires a minimum of 80 reviews for wide-release films (40 for limited releases), a Tomatometer score of 75 percent or above, and at least five reviews from Top Critics. It is a consistency threshold, not a measure of how enthusiastic critics were. A film can be Certified Fresh without a single critic calling it extraordinary.

Why is IMDb's score different from what you might expect from audience reactions?

IMDb applies a proprietary weighting algorithm to its user ratings, specifically designed to resist vote-stuffing and brigading. The displayed score is not a simple average of all submitted ratings. IMDb also trims outliers and adjusts for demographics, so a film targeted by organized rating campaigns often ends up with a score closer to what neutral viewers gave it.

Does Metacritic weight reviewers differently from Rotten Tomatoes?

Yes. Metacritic assigns each critic in its pool a weight based on their perceived stature, and reviews score on a 0 to 100 scale rather than a binary fresh or rotten vote. This means a passionate four-star review counts differently from a barely-passing two-and-a-half-star review, which is closer to how a genuine critical consensus actually forms.

Which rating should I check before paying to rent a movie?

Check Metacritic for prestige drama, arthouse cinema, and Oscar contenders. Check IMDb for genre films, action, horror, and franchise entries. Cross-reference the Rotten Tomatoes Tomatometer for a broad yes-or-no on critical reception. If all three agree, you have a clear signal. If they split by 30 or more points, that gap itself tells you what kind of film you are about to watch.

Where can I see Rotten Tomatoes, IMDb, and Metacritic scores in one place?

Limelight shows Rotten Tomatoes, IMDb, Metacritic, and TMDB scores side by side on every movie and TV show's detail page. Free on iOS and Android, no ads at any tier.