Open on The Last Jedi (2017). Rotten Tomatoes: 91% critics, 42% audience. That 49-point gap generated more internet argument than the film itself. But here's the more interesting question: what does that gap actually mean? Is the film brilliant and misunderstood? Is it a critic-pleasing disappointment that betrayed its fanbase? Is the audience score depressed by organized brigading? The answer involves all three, and knowing how to read that gap changes how you watch films.

Most people look at a score and treat it as a verdict. It isn't. It's a snapshot of a specific population's response at a specific moment, collected through a specific methodology that has real limitations. The score is useful, but only if you understand what it's actually measuring. Once you do, the gap between critic and audience scores stops being confusing and starts being one of the most informative signals you have.

What does a Rotten Tomatoes critic score actually measure?

The Tomatometer is a percentage of binary verdicts, not an average rating. A 91% means that 91% of the counted reviews were positive (Fresh), not that the average grade was 91 out of 100. A critic who gives a film a 6 out of 10 and a critic who gives it a 9 out of 10 each count as one Fresh review, so they contribute equally to the Tomatometer. That structural fact matters more than most people realize.
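To make the difference concrete, here is a small Python sketch comparing a Tomatometer-style percentage with a plain average for two hypothetical films. It assumes, for simplicity, that any grade of 6/10 or higher counts as Fresh; on Rotten Tomatoes the Fresh or Rotten call is attached to each review rather than computed from a fixed cutoff, and the review scores below are invented for illustration.

```python
from statistics import mean

# Simplifying assumption: a 10-point grade counts as "fresh" at 6/10 or above.
# Real Rotten Tomatoes classifications come from each review, not a fixed cutoff.
FRESH_CUTOFF = 6.0


def tomatometer(scores: list[float]) -> int:
    """Share of reviews classified fresh, as a whole-number percentage."""
    fresh = sum(1 for s in scores if s >= FRESH_CUTOFF)
    return round(100 * fresh / len(scores))


def average_rating(scores: list[float]) -> float:
    """Plain mean of the underlying 10-point grades."""
    return mean(scores)


# Hypothetical films, not real review data.
safe_film = [6, 6, 6, 6, 6, 6, 6, 6, 6, 6]      # everyone mildly positive
divisive_film = [9, 9, 9, 9, 9, 9, 9, 4, 4, 4]  # most love it, a few dislike it

for name, scores in [("safe", safe_film), ("divisive", divisive_film)]:
    print(f"{name}: Tomatometer {tomatometer(scores)}%, "
          f"average {average_rating(scores):.1f}/10")
```

Running it prints `safe: Tomatometer 100%, average 6.0/10` and `divisive: Tomatometer 70%, average 7.5/10`. The uniformly lukewarm film posts the perfect Tomatometer, while the divisive one has the higher average grade, which is exactly the distinction a single percentage hides.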

Critics, in general, review for craft: cinematography, direction, script construction, performance, and formal originality. They also review for cultural moment, asking whether the film is doing something interesting relative to where cinema is right now. Both of these evaluative frameworks skew toward rewarding ambition even when execution is imperfect. A film that tries something bold and partly fails often scores better with critics than a film that executes a familiar premise flawlessly.

Who qualifies as a critic on Rotten Tomatoes also matters. The Tomatometer pool includes major national outlets, major regional papers, and a long tail of smaller publications. A consensus of 300 critics is a different thing from a consensus of 20, but the percentage itself looks the same either way. Metacritic operates differently: it uses weighted averages from a curated, smaller set of major outlets. That produces a more granular number but from a narrower sample. When you see a film at 91% on Rotten Tomatoes and 72 on Metacritic, you're not seeing a contradiction. You're seeing two different instruments measuring slightly different things.

What does an audience score actually measure?

Rotten Tomatoes began verifying audience reviews against Fandango ticket purchases in 2019. Before that change, anyone could submit a score without proof of having watched the film, which was the mechanism that made organized brigading so easy. The verified-buyer requirement helps, but it doesn't eliminate the problem entirely, and it introduces a different bias: people who buy tickets in advance tend to skew toward films they already want to see, which means presold enthusiasm is baked into the early numbers.

More fundamentally, audience scores measure satisfaction relative to expectation. That is a meaningfully different thing from evaluating a film on its own terms. A franchise film carries years of established expectations. When a new entry deviates from those expectations, even in a direction critics find formally interesting, the audience registers that deviation as a broken promise. The dissatisfaction is real and honest. It's just measuring something other than whether the film is well-made.

IMDb audience ratings add another layer of complexity. IMDb skews heavily male in its rater demographics, which produces systematic genre preferences in the aggregate scores. Action films, science fiction, and certain prestige dramas are consistently overrepresented at the top of IMDb rankings. Films with predominantly female audiences, foreign-language films, and certain genres associated with emotional directness tend to score lower, not because they're worse, but because the rating pool doesn't reflect the full range of people watching them.

When should you trust the critic score more?

Foreign-language films are the clearest case. Audiences systematically underrate subtitled films relative to their quality, and the gap is driven by accessibility friction rather than anything about the film itself. If you see a foreign-language film with a high critic score and a noticeably lower audience score, the most likely explanation is that a portion of the audience is penalizing the subtitles rather than the film. Critics don't have that bias, or at least not that particular one.

Art house and experimental cinema present a similar case. Critics have the context to evaluate formal choices that might look like flaws to an audience without that background. A deliberately fragmented narrative structure, an unresolved ending, an actor giving a performance that resists conventional emotional legibility: critics can distinguish between a choice and a failure. Audience scores often can't, and they reflect that. Films like First Reformed, Under the Skin, or anything from Terrence Malick's post-Tree of Life career are better navigated by critic scores than by what an audience expecting conventional storytelling found on a Friday night.

Films with slow pacing are another reliable case for trusting critics over audiences. Critics are trained to sit with a film and evaluate how it uses time. Audiences rate their experience, and patience is not uniformly built in. A 140-minute film that earns every minute will often score lower with audiences than a 90-minute film that moves fast and means less.

When should you trust the audience score more?

Pure genre entertainment is where audience scores most reliably outperform critics. Horror, action, and comedy are all genres where the relevant question is "does this deliver on what it promises?" rather than "does this advance the formal possibilities of cinema?" Critics sometimes penalize films for being exactly what they set out to be. An audience that came for a well-executed slasher film and got one will rate that film accurately. A critic who would prefer a more ambitious use of the genre will not.

Franchise sequels also tend to favor audience scores, with an important caveat. When critics review the 12th entry in a franchise without having followed entries 1 through 11 closely, they're missing context that shapes everything: the character arcs, the established rules of the universe, the in-jokes, the weight of a callback to something that happened four films ago. An audience that has invested in a franchise can evaluate whether a new entry honors that investment. Critics often can't.

The caveat: franchise audiences can also be the harshest judges when a film disappoints expectations. The same investment that lets them recognize a genuinely good franchise film also makes them register a betrayal of tone or character more intensely than any critic would. Audience scores for franchise films are high-signal either way; they just require more interpretation than a score for a standalone film.

Comfort cinema is a final case for trusting the audience. Films designed to reliably deliver a familiar emotional experience (a holiday film that hits all the expected notes, a feel-good underdog story that doesn't subvert anything) are often dismissed by critics as slight or formulaic. Audiences know exactly what they signed up for and rate whether it worked. For that category of film, critics are measuring the wrong thing.

When should you ignore both scores?

When a score gap is explained by organized brigading, both the raw number and the gap become unreliable. The most common cases involve segments of the DC and Star Wars fandoms, which have a documented history of coordinating to tank films perceived as betrayals of the property, and politically charged films, where motivated groups rate based on agreement or disagreement with the subject matter rather than anything about the filmmaking. Knowing the film's cultural context tells you whether brigading is likely, and in those cases that context is more useful than the score itself.

Small review counts are a quieter version of the same problem. A 95% on 20 reviews is not the same as a 95% on 400. The score is technically accurate, but the sample is too small to mean much. Rotten Tomatoes displays review counts alongside scores, and I check that number routinely for any film that looks like an outlier.
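One way to see why the review count matters is to put a rough uncertainty range around each percentage. The Python sketch below uses the Wilson score interval, a standard method for proportions that I've chosen for illustration; Rotten Tomatoes publishes no such ranges, and treating each review as an independent draw from a larger pool of possible reviews is a simplifying assumption.

```python
import math


def wilson_interval(positive: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion (z = 1.96)."""
    p = positive / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half_width, center + half_width


# Both samples show 95% fresh: 19 of 20 reviews, and 380 of 400.
for fresh, total in [(19, 20), (380, 400)]:
    low, high = wilson_interval(fresh, total)
    print(f"{fresh}/{total}: plausible range {low:.0%} to {high:.0%}")
```

Both films display the same 95%, but the sketch prints a range of roughly 76% to 99% for 19 of 20 reviews and roughly 92% to 97% for 380 of 400. The small sample is compatible with a film that critics merely liked.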

Early release scores also require caution. A film that opens at 98% in its first week of festival screenings is being scored by a self-selected audience of people who sought it out, often people who already had enthusiasm for the director or subject. That number will typically settle lower as the audience broadens. The inverse is also true: poor early audience scores for wide releases can reflect lower-commitment viewers who showed up on opening weekend without strong pre-existing interest.

And then there's the most important case for ignoring both scores: when you already know the film is for you. If a director you love made a film, the aggregate opinion of people who may not love that director's work is close to irrelevant. Scores are decision tools for uncertainty. When there's no uncertainty, they're noise.

The gap between critic and audience scores is more informative than either number alone. It tells you about the film's relationship to its audience, which is often the only thing you actually need to know.

What does the gap between critics and audience actually signal?

A large positive gap (critics higher than audience) usually means the film prioritizes craft and ambition over satisfaction. It asks something of the viewer. It may be formally unusual, narratively uncomfortable, or paced in a way that requires patience. That's not a warning; it's a description. Whether that description appeals to you depends on what you're looking for tonight.

A large negative gap (audience higher than critics) usually means the film delivers on its genre promise in a way critics are discounting. The audience is responding to something real. Critics may be evaluating the film against a standard the film was never trying to meet. That's the gap that most reliably identifies films worth watching in a given genre, even when critical reception is lukewarm.

The Last Jedi sits at the intersection of all these effects. There was genuine audience dissatisfaction from viewers who felt the film mishandled characters they had followed since The Force Awakens (2015) or, in Luke Skywalker's case, since 1977. There was organized score manipulation by a subset of fans who coordinated to depress the audience rating. And there were critics who rewarded the film's formal ambition in a franchise context where that ambition was always going to alienate viewers who came for a different kind of film. All three things are simultaneously true. The score reflects all of them compressed into a single number, which is why a single number can't tell you any of them.

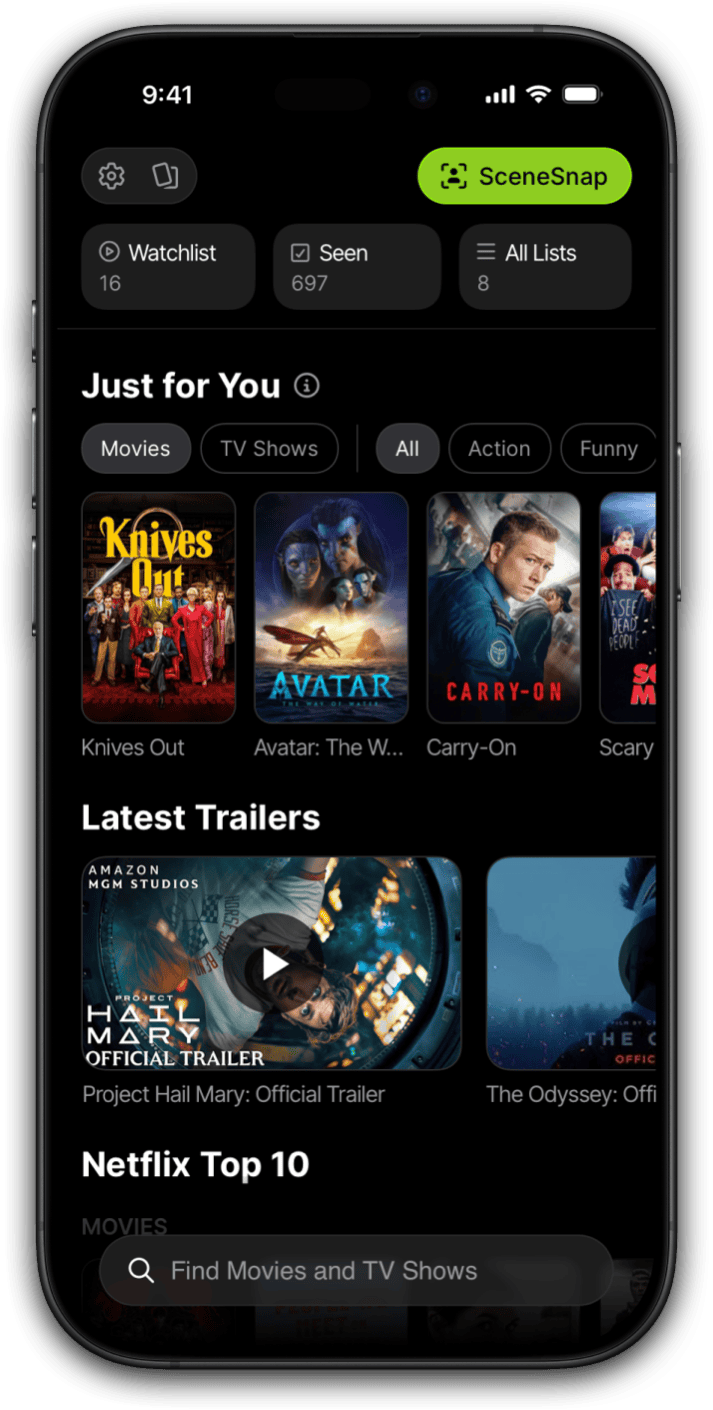

How does Limelight handle multiple scores?

Limelight displays Rotten Tomatoes critics, audience score, Metacritic, and IMDb side by side on every film and TV show. The point is to give you the gap at a glance rather than requiring you to cross-reference four separate sites. You can read the relationship between scores directly and apply whichever framework fits what you're trying to decide. From there, the call is yours.

The deeper point is that no score is a verdict and no score is noise. Each one is a measurement from a specific instrument with specific limitations. Understanding the limitations is what turns a number into information. A 91% and a 42% aren't contradicting each other. They're telling you two different things about a film that means very different things to two very different groups of people, and that's actually the most useful thing a score can tell you.

Frequently asked questions

Which score should I use to decide whether to watch a film?

Neither score alone is sufficient. The most useful signal is the gap between them. A large gap tells you something about who the film was made for and whether it delivered on its premise. Read the gap first, then look at the individual scores for context. If both are high and the gap is small, that's a reliable indicator across most audiences.

Why are critic scores and audience scores so different for franchise films?

Franchise films create specific expectations in their audience. When a film deviates from those expectations, even in a direction critics find artistically interesting, the audience registers it as dissatisfaction. Critics are often reviewing the film as a standalone piece of cinema rather than as the 12th entry in a series they may not have followed closely. These are genuinely different evaluative frameworks, and they produce different results.

Is Metacritic better than Rotten Tomatoes?

They measure different things. Rotten Tomatoes gives you a binary fresh/rotten percentage across a broad pool of critics. Metacritic uses weighted averages from a curated set of major outlets, which produces a more granular score but from a narrower sample. Metacritic is better for understanding how enthusiastically critics received a film. Rotten Tomatoes is better for knowing whether a broad critical consensus exists. Using both together gives you a more complete picture.

Can audience scores be faked or brigaded?

Yes, and it has happened repeatedly. Organized fan groups have coordinated campaigns to tank audience scores for films they felt betrayed their franchise, and to inflate scores for films they wanted to defend. Rotten Tomatoes began verifying audience reviews against Fandango ticket purchases in 2019 to address this, which reduces but does not eliminate the problem. Political films and films attached to large, organized fandoms are the categories most vulnerable to score manipulation.

What's a good aggregated score threshold for a film worth watching?

There is no universal threshold, because the right threshold depends on the genre and your personal tolerance. A 70% on Rotten Tomatoes for a horror film means something different from a 70% for a prestige drama. A useful heuristic: above 75% critics with a gap under 20 points is a reasonably safe watch across most genres. Below 50% critics with an audience score far above it suggests a genre film that critics are undervaluing. Under 30% critics and under 40% audience is a hard pass in most cases.
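For readers who like their rules of thumb explicit, the heuristic above can be written as a short Python sketch. The cutoffs come from the answer itself, and the gap follows the sign convention used earlier (critics minus audience, so positive means critics are higher). Treating "far above" as a gap of 20 points or more is my assumption, borrowed from the "under 20 points" threshold, and the function names are hypothetical.

```python
# Assumption: a "large" gap is 20+ points, mirroring the "under 20 points" rule.
LARGE_GAP = 20


def gap(critics: int, audience: int) -> int:
    """Positive when critics score higher, negative when the audience does."""
    return critics - audience


def rough_call(critics: int, audience: int) -> str:
    """Apply the rule-of-thumb thresholds; fall back to reading the gap."""
    g = gap(critics, audience)
    if critics < 30 and audience < 40:
        return "hard pass"
    if critics < 50 and -g >= LARGE_GAP:
        # Audience well above critics: the large negative gap described earlier.
        return "genre film critics may be undervaluing"
    if critics > 75 and abs(g) < LARGE_GAP:
        return "reasonably safe watch"
    return "no quick call: read the gap and the context"


print(rough_call(91, 42))  # The Last Jedi's scores
```

The Last Jedi falls through every rule (its 49-point gap is too large for the "safe watch" case), which is the point: a heuristic like this sorts the easy cases and leaves the interesting ones to interpretation. It also knows nothing about review counts or brigading, so those checks still have to happen by hand.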