I want to be precise about what I mean when I say streaming algorithms fail you. I'm not saying Netflix's recommendation engine is poorly engineered. It's almost certainly among the most sophisticated recommendation systems ever built at consumer scale, and it is extraordinarily good at its actual job. The problem is that its actual job is not what you think it is when you open the app on a Friday night trying to find something worth watching.

The algorithm is optimized for a specific outcome: keeping you subscribed. That objective produces a system that is very good at getting you to click something within the first 90 seconds you're on the platform, and reasonably good at keeping you watching long enough not to cancel your subscription. What it is not optimized for is finding you the film that's exactly right for tonight, or surfacing the genuinely great film that's buried three carousels down because it doesn't have the studio backing to compete for placement.

These are different objectives, and understanding the gap between them is the key to understanding why the algorithm consistently disappoints serious film fans while simultaneously keeping hundreds of millions of people subscribed.

What does a streaming recommendation algorithm actually optimize for?

Start with the Netflix 97% match badge, which appears on nearly everything in your feed regardless of how little behavioral data Netflix has to support it. The badge was not designed to provide accurate predictions. It was designed to reduce scroll paralysis.

Netflix's internal research identified a critical window: users who can't find something to watch within 60 to 90 seconds have measurably higher churn rates. That finding shaped the entire product. The homepage carousel, the autoplaying trailers, the match percentage badge, the "Top 10 in the U.S. today" row: each of these features is a mechanism for generating a click within that 90-second window. Whether that click leads to a satisfying viewing experience is a secondary concern. Getting the click is the primary one.

Netflix has never disclosed a methodology for how match percentages are calculated. Researchers and journalists who have investigated it consistently arrive at the same conclusion: the number is calibrated to produce confidence, not accuracy. A 97% match badge on a film you're likely to abandon after 20 minutes has done its job if it gets you to start watching. The algorithm records that as engagement. The fact that you stopped is logged as a separate data point that feeds back into future predictions, but the badge itself was never designed to prevent that outcome. It was designed to prevent you from canceling your subscription before you find something.

"Getting the click" and "finding the best film for this person tonight" are related but genuinely different objectives. A system optimized for the first will sometimes produce the second as a byproduct. But the two objectives diverge precisely in the cases where you most need good recommendations: when you're looking for something specific, something challenging, something outside what you've watched before. Those are the moments the algorithm is least equipped to help you, because they require stepping outside the behavioral pattern that its predictions are built on.

What is the tyranny of the carousel?

The carousel interface is the defining UX pattern of every major streaming platform, and it is not a neutral design choice. Each row on a Netflix or Max or Disney+ homepage is a prioritization decision made by someone with a financial interest in what you click. The order of those rows, the films that appear in them, and the titles that lead each row are not editorial judgments about what you'll love most. They are outputs of a system that blends algorithmic prediction with commercial negotiation.

The top row is typically the content with the most studio investment behind it: the new original, the expensive exclusive, the film with a sequel in production that the platform needs you to know about. The carousel creates a hierarchy that appears editorial but is primarily commercial. Library content from three years ago, a film that got a quiet release with minimal marketing, a foreign-language film that didn't have an awards campaign: these titles compete at a structural disadvantage for every position in the carousel relative to whatever the platform currently has a financial stake in promoting.

The visual design reinforces this. Autoplaying trailers start when you hover. Large thumbnail art for promoted titles crowds out smaller entries. The scroll direction pulls you across rows of similar content rather than down into depth. The interface is built to create the sensation of choice while narrowing the actual range of what you're choosing between. You're seeing not what the platform thinks you'll love most, but what the platform most needs you to watch. Those two things overlap more than you might expect, which is why the system doesn't feel obviously broken. But they diverge often enough that the gap compounds over time into a real frustration.

Why do platforms push originals ahead of better library content?

The economics are specific and worth understanding. When a streaming platform produces an original, it owns the content outright. There is no per-stream licensing fee and no license renewal to negotiate. The upfront cost is largely fixed, so each additional view adds little or nothing to what the platform pays. When a platform licenses a film from a studio or a distributor, it typically pays based on subscriber count or stream volume, so that cost grows with success. A licensed film that becomes unexpectedly popular becomes unexpectedly expensive.

An original that gets 10 million views is a successful asset that the platform owns permanently. A licensed film that gets 10 million views is an ongoing cost. The platform therefore has a financial incentive for its algorithm to surface originals ahead of library content, regardless of comparative quality, because the business math is better when you watch the original.
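To make the asymmetry concrete, here is a toy cost model. It assumes, for simplicity, that the licensed film is billed purely per stream (some deals scale with subscriber count instead) and that the original's cost is entirely upfront. The `Title` class and every dollar figure are hypothetical placeholders, not real platform or studio numbers.

```python
from dataclasses import dataclass


@dataclass
class Title:
    """Hypothetical cost model for one title on a streaming platform."""
    name: str
    upfront_cost: float   # paid once: production budget, or zero for a pure per-stream license
    per_view_fee: float   # paid on every stream: zero for an owned original

    def total_cost(self, views: int) -> float:
        return self.upfront_cost + self.per_view_fee * views

    def added_cost(self, from_views: int, to_views: int) -> float:
        """What the platform pays for the extra views between two audience sizes."""
        return self.total_cost(to_views) - self.total_cost(from_views)


# Placeholder figures chosen only to illustrate the shape of the cost curves.
original = Title("owned original", upfront_cost=20_000_000.0, per_view_fee=0.0)
licensed = Title("licensed film", upfront_cost=0.0, per_view_fee=0.50)

for t in (original, licensed):
    extra = t.added_cost(10_000_000, 20_000_000)
    print(f"{t.name}: going from 10M to 20M views costs ${extra:,.0f} more")
```

In this model the original's cost line is flat, so every extra view a recommender steers toward it is free, while the licensed film's cost rises with each view. That marginal difference, rather than total cost, is what creates the incentive described above.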

Netflix has been relatively transparent about this. Reed Hastings and other Netflix executives have discussed publicly how the platform's investment in originals is designed to shift the content mix away from licensed titles, which carry increasing costs as the platform scales. The algorithm is one of the mechanisms through which that shift happens. Originals get placed higher in carousels, recommended more aggressively, and flagged more prominently in search results than comparable licensed films.

The problem this creates for viewers is straightforward: every time a licensed film is better than the original being promoted alongside it, the algorithm is actively directing you away from the better film. This happens constantly. The platform's originals catalog contains a wide range of quality, and the licensed library of every major streaming service contains films that are demonstrably better than many of those originals. The algorithm, by design, doesn't make that comparison. It makes a different comparison, one based on which film is better for the platform's business, and surfaces the answer to that question instead.

Why does collaborative filtering fail for film discovery?

Collaborative filtering is the "viewers who watched X also watched Y" model that forms the backbone of most streaming recommendation engines. The idea is that behavioral patterns across large populations contain useful information about what any individual viewer will like next. If people who watched "Parasite" consistently went on to watch "Oldboy," the system learns that connection and surfaces "Oldboy" to anyone who watches "Parasite."
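A minimal sketch of the "viewers who watched X also watched Y" idea, assuming the simplest possible version: count how many viewers watched each pair of titles, then recommend the titles most often co-watched with the one you just finished. Production systems use far more elaborate models and normalization; this is a textbook illustration, not any platform's code, and the watch histories are made up.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_cowatch_counts(histories: list[list[str]]) -> dict[str, Counter]:
    """For every ordered pair of titles, count the viewers who watched both."""
    cowatch: dict[str, Counter] = defaultdict(Counter)
    for watched in histories:
        # set() so a rewatch doesn't count twice for the same viewer
        for a, b in permutations(set(watched), 2):
            cowatch[a][b] += 1
    return cowatch


def also_watched(cowatch: dict[str, Counter], title: str, k: int = 3) -> list[str]:
    """Return the k titles most often co-watched with `title`."""
    return [other for other, _count in cowatch[title].most_common(k)]


histories = [
    ["Parasite", "Oldboy"],
    ["Parasite", "Oldboy", "Memories of Murder"],
    ["Parasite", "Oldboy"],
    ["Parasite", "Burning"],
]
cowatch = build_cowatch_counts(histories)
print(also_watched(cowatch, "Parasite", k=1))  # ['Oldboy']
```

Nothing in the input records whether any of these films is good; the ranking comes only from how often they were watched together.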

The structural limitation for serious film discovery is that collaborative filtering amplifies existing popularity distributions. A film seen by 5 million people produces strong signal: the system has millions of behavioral data points to learn from. A film seen by 50,000 people produces weak signal. The algorithm doesn't have enough data to learn reliable patterns from it, which means it gets recommended less, which means fewer people see it, which means the signal stays weak. This is a self-reinforcing loop that perpetuates the existing popularity hierarchy rather than surfacing quality.
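The loop can be illustrated with a deliberately crude simulation. It assumes the recommender hands out impressions in proportion to each film's current view count (a stand-in for "stronger signal gets recommended more") and that every impression converts to a view at the same rate. The function name, the impression budget, and the conversion rate are hypothetical; only the 5 million and 50,000 starting audiences come from the text.

```python
def simulate_feedback_loop(
    views: dict[str, float],
    impressions_per_round: float = 1_000_000,
    conversion_rate: float = 0.2,
    rounds: int = 5,
) -> dict[str, float]:
    """Allocate impressions proportionally to current views, then add the resulting views."""
    views = dict(views)  # don't mutate the caller's dict
    for _ in range(rounds):
        total = sum(views.values())
        # Compute every film's gain from this round's counts before updating any of them.
        gains = {
            title: impressions_per_round * (count / total) * conversion_rate
            for title, count in views.items()
        }
        for title, gain in gains.items():
            views[title] += gain
    return views


start = {"widely watched film": 5_000_000, "underseen film": 50_000}
end = simulate_feedback_loop(start)

share_before = start["underseen film"] / sum(start.values())
share_after = end["underseen film"] / sum(end.values())
print(f"underseen share: {share_before:.2%} -> {share_after:.2%}")
print(f"gap in views: {start['widely watched film'] - start['underseen film']:,}"
      f" -> {end['widely watched film'] - end['underseen film']:,.0f}")
```

Because each round's gains are proportional to existing views, the underseen film's share never moves while the absolute gap keeps widening. Nothing in the loop gives the smaller film a way to show it deserves more impressions.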

A genuinely great but underseen film loses to a mediocre but widely watched film every time in a pure collaborative filtering model. The algorithm has no variable for quality that is independent of audience size. It can't evaluate whether a film is better than its viewership suggests. It can only work with what the data tells it, and the data tells it to recommend popular things to people who watch popular things. That's a reasonable heuristic for keeping subscribers engaged. It's a terrible heuristic for discovery.

The films that suffer most are the ones that deserve discovery most: foreign-language films that didn't get marketing campaigns, independent films that got limited distribution, older films that have never accumulated the modern behavioral data that would make them visible in a collaborative filtering system. These are precisely the films that a serious film fan would most want to find, and the algorithm is structurally incapable of helping that viewer find them.

What does behavioral data miss about your actual taste?

Behavioral data records what you watch, not what you want to watch. Those are different things, and the gap between them is where the algorithm consistently fails.

If you've spent three weeks watching procedural crime dramas because you were exhausted after work and needed something that required nothing from you, the algorithm now classifies you as a procedural crime drama fan. It has no mechanism to distinguish "what I watch when I'm depleted" from "what I actually love." The next time you open the platform, it will serve you more procedurals, because that's what the behavioral data supports. The fact that you might have been hoping to finally watch something more demanding is invisible to it.
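Here is a sketch of why a profile built purely from viewing behavior ends up dominated by the exhausted weeknights. It assumes a hypothetical profile that is simply each genre's share of titles watched; the log format and sample data are invented for illustration. The key detail is what the log does not contain: there is no field for why you watched something or how you felt about it.

```python
from collections import Counter


def genre_profile(watch_log: list[tuple[str, str]]) -> dict[str, float]:
    """Each genre's share of everything watched. Entries are (title, genre) only:
    the log has no way to record mood, context, or what you wished you'd watched."""
    counts = Counter(genre for _title, genre in watch_log)
    total = sum(counts.values())
    return {genre: n / total for genre, n in counts.items()}


# Hypothetical history: a few demanding films, then three tired weeks of procedurals.
watch_log = [(f"art-house film {i}", "art-house drama") for i in range(4)]
watch_log += [(f"procedural episode {i}", "procedural crime drama") for i in range(18)]

profile = genre_profile(watch_log)
top_genre = max(profile, key=profile.get)
print(top_genre, f"{profile[top_genre]:.0%}")
```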

The algorithm also has no mechanism to capture aspiration: the films you wish you could find but can't. If a friend mentions a Korean director you've never heard of, and you want to explore their work, the algorithm doesn't know that. It only knows what you've already done, not what you intend to do. It learns from behavior; it can't learn from desire.

There's a third category of data the algorithm structurally misses: the films that never had enough distribution to generate behavioral data in the first place. A film that played at Sundance, got picked up by a small distributor, and had a three-week theatrical run in six cities before landing on a streaming platform with minimal marketing may be genuinely extraordinary. But if only 8,000 people have streamed it, the algorithm doesn't have enough signal to do anything useful with it. The film is invisible not because viewers didn't want to find it, but because the distribution pipeline that would have created the behavioral data to make it visible never existed.

What's the alternative to the algorithmic carousel?

Platform-agnostic discovery tools, human curation, and explicit filtering. Each of these approaches sidesteps the fundamental problem with the algorithmic carousel: that the results you see are shaped by the platform's financial interests rather than your actual preferences.

Human curation works because curators with genuine taste in a specific territory know things the algorithm doesn't: which films are better than their audience size suggests, which director's earlier work is worth tracking down, which festival section has historically produced the best films in a given genre. A well-constructed Letterboxd list from a thoughtful curator will outperform the Netflix homepage for discovery purposes every time, because the curator's goal is to share films they believe in, not to keep you subscribed.

Explicit filtering works because it bypasses the algorithm's weighting entirely. If you can search by runtime, genre, decade, mood, and streaming service simultaneously, you get results that match your stated preferences rather than the platform's prediction of what you'll click. The results are unweighted by studio investment, by original versus licensed status, by completion-rate data from other users. You see what matches your criteria.
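Explicit filtering is simple enough to sketch in a few lines. The `Film` record, its field names, the placeholder titles and service names, and the choice to sort by title are all assumptions made for illustration; this is not Limelight's implementation. Mood is left out because it needs a tagging scheme the text doesn't specify. The `is_original` flag is carried in the data but deliberately never consulted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Film:
    title: str
    runtime_min: int
    genre: str
    year: int
    services: frozenset[str]
    is_original: bool  # available in the data, never used below


def explicit_filter(films, *, max_runtime=None, genre=None, decade=None, service=None):
    """Keep films that satisfy every criterion the viewer actually stated.
    Unset criteria (None) are ignored; results come back in a neutral title order."""
    def matches(f: Film) -> bool:
        return (
            (max_runtime is None or f.runtime_min <= max_runtime)
            and (genre is None or f.genre == genre)
            and (decade is None or decade <= f.year < decade + 10)
            and (service is None or service in f.services)
        )
    return sorted((f for f in films if matches(f)), key=lambda f: f.title)


films = [
    Film("Film A", 95, "thriller", 1997, frozenset({"Service X"}), is_original=False),
    Film("Film B", 140, "thriller", 1994, frozenset({"Service X", "Service Y"}), is_original=True),
    Film("Film C", 88, "thriller", 1999, frozenset({"Service Y"}), is_original=False),
    Film("Film D", 100, "comedy", 1995, frozenset({"Service X"}), is_original=True),
]
results = explicit_filter(films, max_runtime=120, genre="thriller", decade=1990, service="Service X")
print([f.title for f in results])  # ['Film A']
```

Sorting by title is one neutral choice; any ordering that ignores `is_original` and promotional spend would serve the same purpose.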

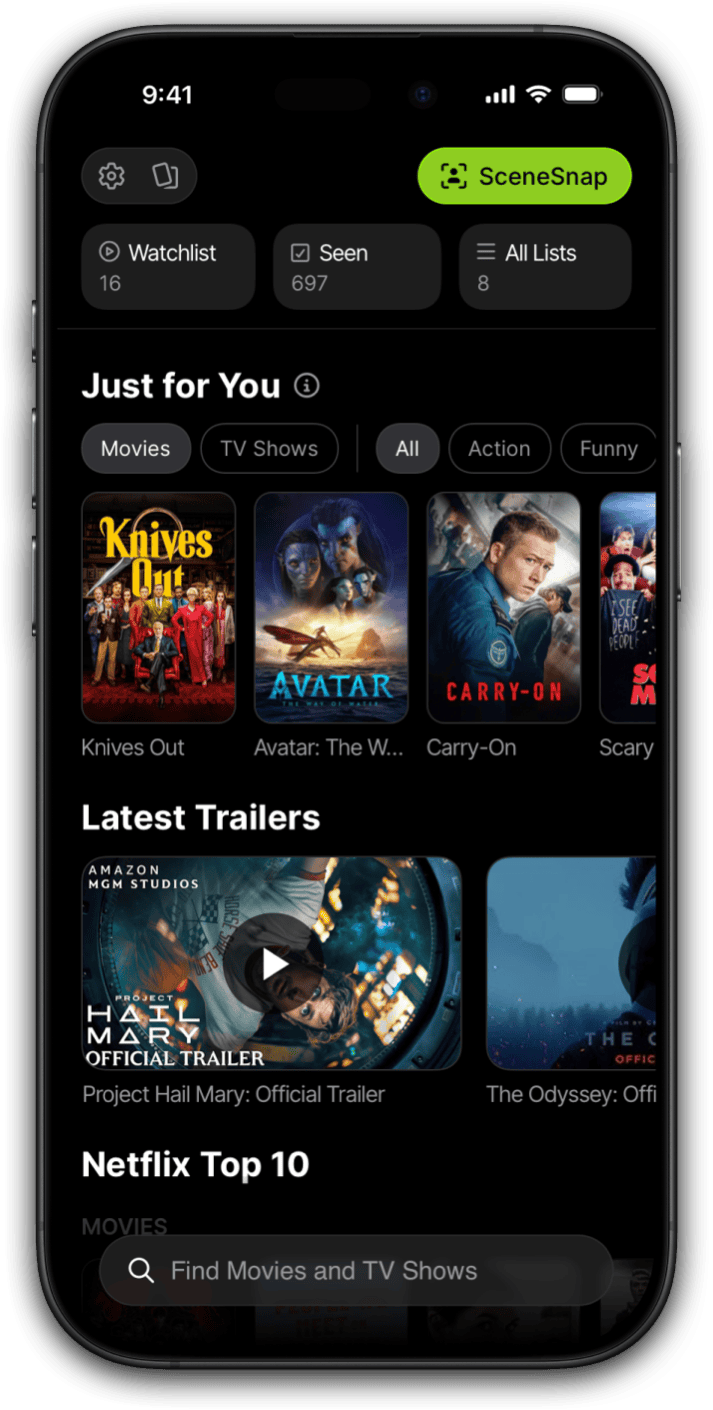

I built Limelight because the algorithmic recommendation experience on every major platform fails in the same way: it's filtered through the platform's business interests rather than the viewer's preferences. Limelight's approach is different. You set filters, search for films by name or description, and save what you find to a watchlist that travels with you across services. Nothing in Limelight is weighted by which studio paid for placement. The results you see are filtered by your explicit preferences, not by a system optimized to reduce churn on someone else's platform.

Building an algorithm that keeps you subscribed and building one that finds you the best film for tonight are related problems. They are not the same problem, and every major streaming platform has chosen to solve the first one.

The useful reframe is this: the streaming algorithm is a retention tool that has been marketed as a discovery tool. Once you understand that distinction, the experience of using it makes more sense. It will occasionally surface something you love, and that's enough to keep most subscribers paying. But it will consistently fail to surface the films that would most change your relationship with cinema, because those films are precisely the ones that don't perform well on the metrics the algorithm was built to optimize.

The alternative is not to abandon streaming platforms. They have enormous libraries, and for viewers the value for money is genuinely good. The alternative is to do your discovery somewhere else and then bring your watchlist back to the platform to watch. Decouple the discovery step from the platform's homepage, and the carousel's grip on what you end up watching loosens immediately.

Frequently asked questions

What does Netflix's 97% match rating actually mean?

Netflix has never disclosed a public methodology for its match percentage. The consensus among researchers and journalists who have investigated it is that the badge functions primarily as an anti-scroll mechanism: it's designed to create enough confidence to produce a click within 60 to 90 seconds, the window Netflix has identified as critical for reducing churn. The percentage is not a peer-reviewed prediction score. It's a number calibrated to reduce scroll paralysis, not to accurately forecast your satisfaction with a film.

Why does Netflix keep recommending things I've already seen or don't want to watch?

The recommendation algorithm learns from behavioral signals: what you start, how much of it you finish, and your broader engagement history. It doesn't have a reliable mechanism to distinguish between "I watched this because I wanted to" and "I watched this because it was on." It also doesn't read your mood, your aspiration, or your actual considered taste. If you've spent two weeks watching genre content because you were exhausted, the algorithm now classifies you as a genre fan and will serve you more of the same, regardless of what you actually care about.

Do streaming platforms favor their own original content in recommendations?

Yes, and this isn't a secret. Netflix executives have said publicly that the company's investment in originals is meant to shift its content mix away from licensed titles, and the recommendation system is one of the mechanisms through which that shift happens. Originals, which Netflix owns outright, have a better return on investment when watched than licensed films, whose costs typically grow with subscriber count or stream volume. The platform therefore has a structural financial incentive to surface originals ahead of library content, regardless of comparative quality. This is a business decision, not an editorial one, and it diverges from viewer interest every time a licensed film is better than the original placed alongside it.

What is collaborative filtering and why does it fail for niche film discovery?

Collaborative filtering is the "viewers who watched X also watched Y" model that forms the backbone of most streaming recommendation engines. Its structural limitation for serious film discovery is that it amplifies existing popularity distributions. A film seen by 5 million people produces strong signal; a film seen by 50,000 produces weak signal. The algorithm therefore perpetuates the popularity hierarchy rather than surfacing quality. A genuinely great but underseen film loses to a mediocre but widely watched one every time in a collaborative filtering model, because the algorithm has no variable for quality independent of audience size.

Is there a streaming service with genuinely good recommendations?

No major streaming platform has solved this problem, because the structural conflict between platform business interests and viewer discovery interests hasn't been resolved. The platforms that come closest tend to be smaller, curation-forward services like MUBI, which uses a rotating editorial selection rather than an algorithm. For mainstream platforms, the recommendation engine will always be filtered through the platform's business priorities. The most reliable alternative is platform-agnostic discovery: using tools that let you filter by your own explicit preferences without any platform's financial interests shaping what you see.